Digital workflow management is growing in importance

Automated digital workflows are key to efficient and successful business processes: Departments can focus on the important decisions and always have the latest reports at their disposal, analytical dashboards show up-to-date information, and prediction models are trained with the best-suited data available. Availability and accountability of data are crucial for trust in data-driven decision making and planning. Trust the world’s most popular open source workflow orchestration platform to run your workflows like clockwork: Apache Airflow.

Apache Airflow is the leading open source workflow orchestration framework. It runs the time-critical processes and data pipelines of hundreds of companies worldwide, including pioneers of the data-centric business model like Airbnb, Pinterest, Spotify, and Zalando. Cloud giants AWS and Google Cloud Platform offer Airflow as part of their regular service catalog, and Astronomer has even built its entire product and company around the open source project.

The success of Airflow is based on the project’s core principles: scalability, extensibility, dynamism, and elegance. The modular architecture, built with the Python programming language, can be adapted to meet almost any technical requirement. Workflow definitions in Airflow are expressed as Python code, which makes the system easy to learn for anyone with some programming experience. Since Airflow is not specifically a data pipeline tool, there are no boundaries to what business logic can be automated with the system. The extended Airflow ecosystem boasts connector libraries for almost any third-party system one can think of, including AWS, Azure, Oracle, Exasol, Salesforce, and Snowflake. NextLytics can even provide you with SAP integration! And last but not least, there are no license fees and no vendor lock-in to fear, thanks to the permissive Apache software license.

Reliable workflow orchestration is an absolute necessity for the digital organization. Continue reading or download our whitepaper to learn more about how Airflow can contribute to your company’s ongoing success and how NextLytics can support you on that journey.

Whitepaper: Effective workflow management with Apache Airflow 2.0

Digital workflows with the open source platform Apache Airflow

Creating advanced workflows in Python

In Apache Airflow, workflows are written in Python, so the barrier to entry is low. Within a few minutes you can define even complex workflows with conditional branches and dependencies on external third-party systems.
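To make the idea of dependencies and conditional branches concrete, here is a minimal sketch in plain Python. It deliberately uses only the standard library (`graphlib`, Python 3.9+) and not Airflow's own API; the task names, the `row_count` condition, and the helper functions are hypothetical and chosen for illustration only.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks; each one maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "validate": {"extract"},
    "load": {"validate"},
    "notify_on_error": {"validate"},
}


def choose_branch(row_count: int) -> str:
    """Conditional branch: load when data arrived, otherwise send a notification."""
    return "load" if row_count > 0 else "notify_on_error"


def plan_run(row_count: int) -> list[str]:
    """Return the tasks for one workflow run in dependency order."""
    branches = {"load", "notify_on_error"}
    skipped = branches - {choose_branch(row_count)}
    order = TopologicalSorter(dependencies).static_order()
    return [task for task in order if task not in skipped]


print(plan_run(row_count=42))
print(plan_run(row_count=0))
```

In Airflow itself, the same structure would be declared as tasks in a workflow definition, and the scheduler would take care of ordering and skipping the branch that was not chosen.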

Schedule, execute and monitor workflows

Programmatic scheduling, execution, and monitoring of workflows run smoothly thanks to the interplay of Airflow's components. Performance and availability can be adapted to even your most demanding requirements.

Best suited for Machine Learning

Apache Airflow is well suited to Machine Learning requirements. Even complex Machine Learning workflows can be orchestrated and managed with it, and the differing software and hardware requirements of individual steps can be accommodated easily.

Reliable orchestration of third-party systems

Even the standard installation of Apache Airflow includes numerous integrations with common third-party systems. This allows you to set up a robust connection in no time, and without risk: the connection data is stored encrypted in the backend.

Ideal for the Enterprise Context

Thanks to its excellent scalability, Airflow meets the requirements of start-ups and large corporations alike. As a top-level project of the Apache Software Foundation with its origins at Airbnb, it was designed for economical large-scale deployment from the beginning.

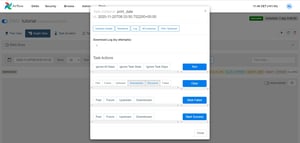

A glance at the comprehensive, intuitive web interface

A major advantage of Apache Airflow is the modern, comprehensive web interface. With role-based authentication, the interface gives you a quick overview or serves as a convenient access point for managing and monitoring workflows.

The orchestration of third-party systems is realized through numerous existing integrations.

- Apache Hive

- Kubernetes Engine

- Amazon DynamoDB

- Amazon S3

- Amazon SageMaker

- Databricks

- Hadoop Distributed File System (HDFS)

- Bigtable

- Google Cloud Storage (GCS)

- Google BigQuery

- Google Cloud ML Engine

- Azure Blob Storage

- Azure Data Lake

- ...

The workflow management platform for your demands

‣ Flexibility by customization

Adaptability comes from numerous plugins, macros, and custom classes. Since Airflow is based entirely on Python, the platform can in principle be changed down to its foundations. Adapt Apache Airflow to your current needs at any time.

‣ Truly scalable

Scaling with common systems such as Celery, Kubernetes, and Mesos is possible at any time. Lightweight containerization can be set up in this context.

The workflow management platform is quickly available without license fees and with minimal installation effort. You can always use the latest versions to the full extent without any fees.

Airflow is the de facto standard for workflow management. Its community includes not only users but also dedicated developers from around the world, so the platform benefits from their work. Current ideas and their implementation in code can be found online.

‣ Agility by simplicity

Defining workflows in Python greatly speeds up development, and the workflows benefit from the flexibility the language offers. The highly usable web interface makes it quick to troubleshoot workflows and apply changes.

State-of-the-art workflow management with Apache Airflow 2.X

- Fully functional REST API with numerous endpoints for two-way integration of Airflow into different systems such as SAP BW

- Functional definition of workflows to implement data pipelines and for improved data exchange between tasks in the workflow using the TaskFlow API

- Just-in-time scheduling based on change detection or availability of required data items

- Interval-based checking of a starting condition with Smart Sensors, which keep the workload of the workflow management system as low as possible

- Dynamic task creation and scaling based on metrics of the current data flow

- Improved business logic monitoring through integration with data observability frameworks

- Increased usability in many areas (simplified Kubernetes operator, reusable task groups, automatic update of the web interface)

Have a chat with our expert!

FAQ - Apache Airflow

These are some of the most frequently asked questions about Apache Airflow

- Scalability: Easily deployed on large systems like Kubernetes and Celery.

- Customizable: Extendable through plugins, macros, and custom classes.

- Integration: Numerous integrations with third-party systems like AWS, Google Cloud, and SAP.

- User-friendly Web Interface: With role-based authentication and easy access to workflows.

Automatic file transfer

Thanks to the large number of integrations with other systems, data and file transfers are easy to implement. So-called sensors check start conditions, such as the existence of a file, at periodic intervals. For example, a CSV file can be loaded from a cloud service into a database. In this way, unnecessary manual work can be automated.
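The sensor pattern described above can be sketched in plain Python. This is not Airflow's sensor API, only an illustration of the underlying idea using the standard library; the function names, the `poke_interval` and `timeout` parameters, and the `sales` table layout are assumptions made for the example.

```python
import csv
import sqlite3
import time
from pathlib import Path


def wait_for_file(path: Path, poke_interval: float = 1.0, timeout: float = 10.0) -> bool:
    """Check periodically whether the file exists; give up after `timeout` seconds."""
    deadline = time.monotonic() + timeout
    while True:
        if path.exists():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poke_interval)


def load_csv(path: Path, conn: sqlite3.Connection) -> int:
    """Load a two-column CSV with a header row into a table; return the row count."""
    with path.open(newline="") as handle:
        reader = csv.reader(handle)
        next(reader)  # skip the header row
        rows = [(name, float(amount)) for name, amount in reader]
    # Hypothetical target table for the example.
    conn.execute("CREATE TABLE IF NOT EXISTS sales (name TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    conn.commit()
    return len(rows)
```

A workflow would call `wait_for_file` first and run `load_csv` only once it returns `True`. In Airflow, the waiting step and the loading step would be separate tasks, so that a missing file shows up in monitoring as a waiting or timed-out sensor rather than a failed load.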

Triggering of external process chains via API

If there is no easy way to integrate a system via a task operator (and the community has not provided a solution yet), a connection via APIs and HTTP requests is still possible at any time. For example, a process chain in SAP BW can be started and tracked synchronously. Conversely, other systems can integrate with Airflow through its own API without any problems.
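Starting an external process and tracking it synchronously usually comes down to a "trigger, then poll" loop. The sketch below shows that loop in plain Python. The two callables stand in for the actual HTTP requests, and the status values `"running"`, `"finished"`, and `"failed"` are hypothetical; a real system such as SAP BW defines its own endpoints and status codes.

```python
import time
from typing import Callable


def run_external_chain(
    start: Callable[[], str],
    get_status: Callable[[str], str],
    poll_interval: float = 5.0,
    timeout: float = 600.0,
) -> str:
    """Start an external process and poll until it reaches a final status."""
    run_id = start()  # e.g. an HTTP POST that returns a run identifier
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        status = get_status(run_id)  # e.g. an HTTP GET on a status endpoint
        if status in {"finished", "failed"}:  # hypothetical final states
            return status
        time.sleep(poll_interval)
    raise TimeoutError(f"run {run_id} did not finish within {timeout} seconds")
```

Passing the HTTP calls in as functions keeps the polling logic independent of any particular client library and makes it easy to test with stubs.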

Executing ETL workflows

Your data management will benefit the most from Apache Airflow. It has never been easier to combine different structured data sources into one target table. Airflow was originally developed for the needs of Extract-Transform-Load (ETL) workflows and offers smart concepts, such as reusable workflow parts, for building even complex workflows quickly and robustly.

Implementing Machine Learning processes

The development of a Machine Learning application involves many processes that are ideally implemented as workflows. The productive execution of a model can also be implemented as a workflow, for example to provide current forecast data at fixed intervals. Data preparation and training of the current model version can be easily realized with Airflow.

Would you like to know more about Machine Learning?

Find interesting articles about this topic in our Blog
